How Much Does It Cost to Train an AI Model?

A practical guide to AI training costs in 2026, including GPU pricing.

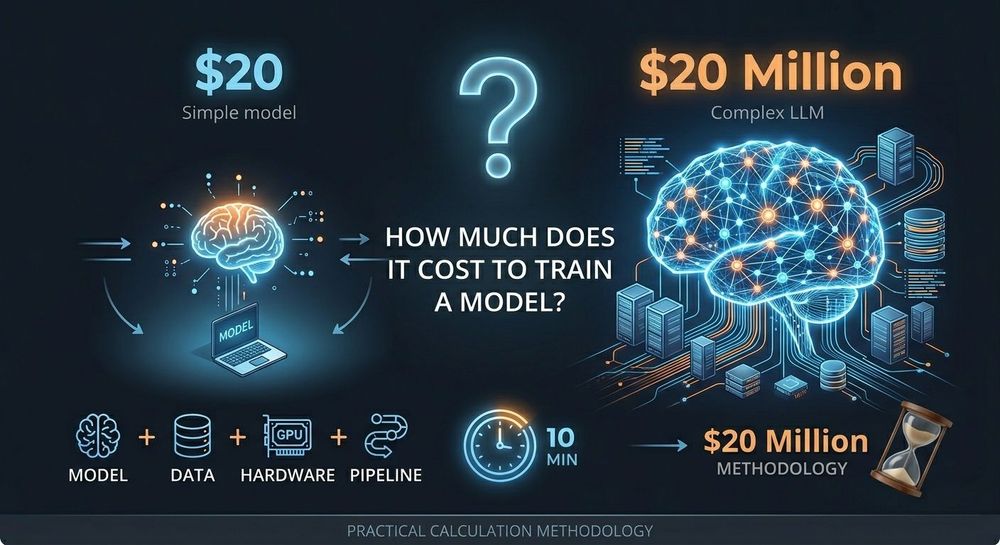

The question sounds simple: “How much does it cost to train an AI model?” The honest answer is anywhere from $20 to $20 million, and both numbers can be completely accurate.

The difference comes down to a few core factors: model size, dataset scale, GPU infrastructure, and how efficiently the training pipeline is configured. A small fine-tuning project for an internal chatbot may cost less than a monthly SaaS subscription, while large-scale foundation model training can consume millions of dollars in compute resources.

This guide focuses on practical budgeting rather than vague estimates. The goal is to help teams understand how to evaluate training costs realistically before launching expensive experiments.

Why AI Training Costs Vary So Much

When companies first start working with GPU infrastructure, they often underestimate how many independent variables affect pricing. The cost of training is not determined by the model alone. It also depends on the amount of data, the number of training epochs, GPU efficiency, and the quality of the engineering pipeline itself.

In practice, poorly optimized code is one of the biggest sources of overspending. Inefficient data loading, training at higher numerical precision than the model needs, poorly chosen batch sizes, or misconfigured distributed training can double or even triple GPU usage. Many teams also overprovision hardware, renting H100 clusters for workloads that would run perfectly well on A100s or RTX 4090s.

Another common issue is idle infrastructure. GPU instances continue billing while engineers configure environments, debug CUDA issues, or leave training servers running overnight. For some projects, idle time alone accounts for 30–40% of the final invoice.
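To make the effect of idle time concrete, here is a minimal Python sketch. It assumes the idle share is expressed as a fraction of the final invoice, as in the 30–40% figure above. The function name and the $60 of useful compute are illustrative, not taken from any provider's billing.

```python
def invoice_with_idle(productive_cost: float, idle_share: float) -> float:
    """Return the total invoice when `idle_share` of it is idle time.

    idle_share is a fraction of the FINAL invoice (0.3 means 30% of the
    bill paid for GPUs that were not training), so:
        invoice = productive_cost / (1 - idle_share)
    """
    if not 0 <= idle_share < 1:
        raise ValueError("idle_share must be in [0, 1)")
    return productive_cost / (1 - idle_share)


# Hypothetical run: $60 of useful training compute.
for share in (0.0, 0.3, 0.4):
    total = invoice_with_idle(60.0, share)
    print(f"idle share {share:.0%}: invoice ${total:.2f}")
```

Under these assumptions, a 40% idle share turns $60 of useful compute into an invoice of about $100, which is why shutting down unused instances is usually the first cost control to put in place.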

Instead of relying on rough estimates, experienced teams usually calculate costs using a simple framework based on GPU-hours.

The Core Formula Behind AI Training Costs

At its simplest, the calculation looks like this:

Training Cost = GPU-Hours × Price per GPU-Hour

The challenge lies in estimating GPU-hours accurately. One GPU-hour is one GPU running for one hour, so 8 GPUs running for 10 hours consume 80 GPU-hours. In real-world workloads, GPU consumption depends on the amount of computation required by the model, the size of the dataset, the number of epochs, and the efficiency of the hardware being used.

Most ML teams do not derive this figure from first principles. Instead, they run a short pilot experiment on a small percentage of the dataset and extrapolate total runtime from the measured results. This approach is far more reliable than theoretical estimates alone.
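The pilot-and-extrapolate approach can be sketched in a few lines of Python. This is a rough illustration, not a definitive estimator. It assumes runtime scales linearly with the amount of data processed and that the pilot runs on the same number of GPUs as the full job. The function names, the 2% pilot, and the $1.50 hourly rate are all hypothetical.

```python
def extrapolate_gpu_hours(pilot_wall_hours: float, pilot_fraction: float,
                          num_gpus: int, epochs: int = 1) -> float:
    """Estimate total GPU-hours from a pilot run.

    pilot_wall_hours: wall-clock hours the pilot took
    pilot_fraction:   share of one epoch's data the pilot processed (0 < f <= 1)
    num_gpus:         GPUs used by both the pilot and the full run (assumption)
    epochs:           planned passes over the full dataset
    """
    if not 0 < pilot_fraction <= 1:
        raise ValueError("pilot_fraction must be in (0, 1]")
    wall_hours_per_epoch = pilot_wall_hours / pilot_fraction  # linear scaling assumption
    return wall_hours_per_epoch * epochs * num_gpus


def training_cost(gpu_hours: float, price_per_gpu_hour: float) -> float:
    """Training Cost = GPU-Hours x Price per GPU-Hour."""
    return gpu_hours * price_per_gpu_hour


# Hypothetical pilot: 30 minutes on 2% of the data, 1 GPU, 3 planned epochs.
hours = extrapolate_gpu_hours(0.5, 0.02, num_gpus=1, epochs=3)
print(f"estimated GPU-hours: {hours:.1f}")
print(f"estimated cost: ${training_cost(hours, 1.50):.2f}")
```

In practice the pilot should run long enough to get past warm-up effects such as data caching and compilation, since those distort a linear extrapolation.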

Beyond raw GPU pricing, several hidden infrastructure costs also affect the final budget. Large datasets require storage, checkpoints for large language models can consume hundreds of gigabytes, and cloud providers often charge separately for outbound bandwidth. Debugging time, failed experiments, and interrupted spot instances can increase costs significantly if the pipeline is not designed properly.
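One way to keep these hidden costs visible is to budget them as separate line items next to compute. The Python sketch below is an illustration under stated assumptions: every field name and every rate in the example is hypothetical, and failed or interrupted runs are modelled as a simple percentage overhead on compute.

```python
from dataclasses import dataclass


@dataclass
class BudgetInputs:
    gpu_hours: float                     # GPU-hours for the successful run
    price_per_gpu_hour: float            # USD
    rerun_overhead: float = 0.0          # extra compute for failed or interrupted runs (0.25 = +25%)
    storage_gb: float = 0.0              # datasets plus checkpoints kept on disk
    storage_price_gb_month: float = 0.0  # USD per GB per month
    storage_months: float = 1.0
    egress_gb: float = 0.0               # data transferred out of the provider
    egress_price_gb: float = 0.0         # USD per GB


def estimate_budget(b: BudgetInputs) -> dict:
    """Break a training budget into compute, storage, and egress line items."""
    compute = b.gpu_hours * b.price_per_gpu_hour * (1 + b.rerun_overhead)
    storage = b.storage_gb * b.storage_price_gb_month * b.storage_months
    egress = b.egress_gb * b.egress_price_gb
    return {"compute": compute, "storage": storage, "egress": egress,
            "total": compute + storage + egress}


# Hypothetical project: all rates below are placeholders, not real quotes.
budget = estimate_budget(BudgetInputs(
    gpu_hours=100, price_per_gpu_hour=2.00, rerun_overhead=0.25,
    storage_gb=500, storage_price_gb_month=0.10, storage_months=2,
    egress_gb=200, egress_price_gb=0.05,
))
for item, usd in budget.items():
    print(f"{item:>8}: ${usd:,.2f}")
```

With these placeholder numbers, the raw compute estimate of $200 grows to a total of about $360 once reruns, storage, and bandwidth are included.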

Real-World Training Cost Examples

A common business use case today is fine-tuning a 7B parameter model such as Mistral using company-specific documents or support tickets. With LoRA or QLoRA, this workload can run efficiently on a single RTX 4090 or A100 GPU. Typical training time ranges from 8 to 15 hours, and total infrastructure costs often stay below $50 even with multiple experiments included. For many businesses, modern AI fine-tuning is now surprisingly affordable.

Training a mid-sized model from scratch is more expensive but still manageable for startups and research teams. A 1–3B parameter model trained on a specialized dataset usually requires a cluster of 4–8 A100 GPUs with distributed training frameworks such as DDP or FSDP. Depending on optimization quality and dataset size, projects in this category typically cost between a few hundred and a few thousand dollars.

Large-scale training changes the economics entirely. Fine-tuning or training models in the 30B–70B range generally requires H100 clusters, advanced memory optimization, mixed precision training, and distributed checkpointing strategies. Even relatively short runs can consume hundreds of GPU-hours. In production environments, budgets for these workloads often range from several thousand dollars to well into six figures.

Estimated AI Training Costs in 2026

| Task Type | Recommended GPU | Estimated GPU-Hours | Estimated Cost |
|---|---|---|---|
| 7B Fine-Tuning (LoRA) | 1× RTX 4090 / A100 | 8–20 | $5–30 |
| 13B Fine-Tuning | 1–2× A100 80GB | 20–50 | $30–150 |
| 1–3B Training from Scratch | 4–8× A100 80GB | 200–500 | $250–1,500 |
| 30B–70B Full Fine-Tuning | 8–16× H100 | 300–800 | $1,000–5,000 |
| 7B Training from Scratch | 8× A100 / H100 | 1,500–3,000 | $2,500–10,000 |
| 30B+ Training from Scratch | 32–128× H100 | 10,000+ | $30,000+ |
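A quick way to sanity-check estimates like these is to work out the hourly rate each row implies. The Python sketch below does that for the rows with closed ranges. It assumes the low cost pairs with the low GPU-hour figure and the high cost with the high figure, which the table does not state explicitly. The open-ended 30B+ row is left out because it has no upper bound.

```python
# (task, gpu_hours_low, gpu_hours_high, cost_low_usd, cost_high_usd), copied from the table
ROWS = [
    ("7B Fine-Tuning (LoRA)", 8, 20, 5, 30),
    ("13B Fine-Tuning", 20, 50, 30, 150),
    ("1-3B Training from Scratch", 200, 500, 250, 1500),
    ("30B-70B Full Fine-Tuning", 300, 800, 1000, 5000),
    ("7B Training from Scratch", 1500, 3000, 2500, 10000),
]


def implied_rates(rows):
    """Map each task to its (low, high) implied price in USD per GPU-hour.

    Assumption: low cost corresponds to low hours, high cost to high hours.
    """
    return {
        task: (cost_lo / hours_lo, cost_hi / hours_hi)
        for task, hours_lo, hours_hi, cost_lo, cost_hi in rows
    }


for task, (low, high) in implied_rates(ROWS).items():
    print(f"{task:<28} ${low:.2f} to ${high:.2f} per GPU-hour")
```

If the implied rate for a planned workload falls far outside what providers currently charge for the recommended GPU, either the GPU-hour estimate or the price assumption needs another look.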

How Companies Reduce Training Costs

The biggest savings usually come from infrastructure decisions rather than model changes. Many workloads do not actually benefit enough from H100 GPUs to justify the higher hourly pricing. For models below roughly 13B parameters, A100 GPUs often provide a better balance between speed and cost.
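Whether a faster GPU is worth its price comes down to a break-even calculation: the more expensive card only saves money if its speedup on the actual workload exceeds the ratio of the hourly prices. The Python sketch below illustrates this. The hourly rates and speedup values are hypothetical placeholders, and the real speedup has to be measured on the workload in question.

```python
def job_cost(baseline_hours: float, price_per_hour: float, speedup: float = 1.0) -> float:
    """Cost of a job that needs `baseline_hours` on the reference GPU,
    when run on a GPU that is `speedup` times faster."""
    if speedup <= 0:
        raise ValueError("speedup must be positive")
    return baseline_hours / speedup * price_per_hour


def breakeven_speedup(fast_price: float, slow_price: float) -> float:
    """Speedup the pricier GPU must deliver just to match the cheaper one's cost."""
    return fast_price / slow_price


# Hypothetical rates: reference GPU at $1.50/hour, faster GPU at $3.00/hour.
slow_rate, fast_rate = 1.50, 3.00
print(f"break-even speedup: {breakeven_speedup(fast_rate, slow_rate):.2f}x")

baseline = job_cost(100, slow_rate)  # 100 hours on the reference GPU
for speedup in (1.6, 2.5):           # assumed speedups, not benchmarks
    cost = job_cost(100, fast_rate, speedup)
    verdict = "cheaper" if cost < baseline else "more expensive"
    print(f"speedup {speedup}x: ${cost:.2f} vs ${baseline:.2f} ({verdict})")
```

This only compares direct compute cost. A faster GPU can still be the right choice when wall-clock time matters more than the invoice.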

Spot and preemptible instances can reduce compute expenses dramatically, although they require reliable checkpointing to avoid losing progress during interruptions. Teams that optimize their pipelines properly can often reduce total costs by 40–60% without sacrificing model quality.
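The checkpointing requirement can be illustrated with a small resume loop. This is a minimal sketch using only the Python standard library, with a counter standing in for real model and optimizer state. The function names, the JSON format, and the `stop_after` parameter used to simulate a preemption are all assumptions made for the example.

```python
import json
import os
import tempfile


def save_checkpoint(path, state):
    """Write the checkpoint atomically: temp file first, then rename over the target,
    so an interruption mid-write cannot leave a corrupt checkpoint behind."""
    directory = os.path.dirname(os.path.abspath(path))
    fd, tmp_path = tempfile.mkstemp(dir=directory, suffix=".tmp")
    with os.fdopen(fd, "w") as f:
        json.dump(state, f)
    os.replace(tmp_path, path)


def load_checkpoint(path):
    """Return the last saved state, or a fresh state if no checkpoint exists."""
    if os.path.exists(path):
        with open(path) as f:
            return json.load(f)
    return {"step": 0, "running_total": 0}


def train(path, total_steps, checkpoint_every, stop_after=None):
    """Resume from the last checkpoint and run until total_steps.
    `stop_after` simulates a spot interruption after that many steps."""
    state = load_checkpoint(path)
    steps_this_session = 0
    while state["step"] < total_steps:
        if stop_after is not None and steps_this_session >= stop_after:
            return state  # simulated preemption: progress since the last save is lost
        state["running_total"] += state["step"]  # stand-in for one real training step
        state["step"] += 1
        steps_this_session += 1
        if state["step"] % checkpoint_every == 0:
            save_checkpoint(path, state)
    save_checkpoint(path, state)
    return state


with tempfile.TemporaryDirectory() as workdir:
    ckpt = os.path.join(workdir, "ckpt.json")
    train(ckpt, total_steps=100, checkpoint_every=10, stop_after=25)  # interrupted
    print("resuming from step", load_checkpoint(ckpt)["step"])
    final = train(ckpt, total_steps=100, checkpoint_every=10)
    print("finished at step", final["step"])
```

The checkpoint interval is a trade-off: saving more often loses less work per interruption but spends more time and storage on checkpoints.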

Modern optimization techniques also play a major role. Mixed precision training reduces memory consumption while increasing throughput, gradient checkpointing allows larger models to fit into memory, and LoRA-based fine-tuning can shrink compute requirements by an order of magnitude compared to full fine-tuning. For most enterprise applications, these methods are more than sufficient.
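Part of LoRA's saving is visible in simple parameter arithmetic. LoRA freezes the original weight matrix and trains two small low-rank matrices instead, so the number of trainable parameters per adapted layer drops sharply. The Python sketch below shows the count for one layer. The 4096×4096 layer size and rank 8 are illustrative choices, and the result describes trainable parameters (which drive gradient and optimizer memory), not total compute.

```python
def full_params(d_in: int, d_out: int) -> int:
    """Trainable parameters when fully fine-tuning one d_out x d_in weight matrix."""
    return d_in * d_out


def lora_trainable_params(d_in: int, d_out: int, rank: int) -> int:
    """Trainable parameters LoRA adds for the same matrix.

    The frozen weight W is augmented with B @ A, where
    B has shape (d_out, rank) and A has shape (rank, d_in).
    """
    return rank * (d_in + d_out)


# Illustrative layer: a 4096 x 4096 projection adapted with rank 8.
d, r = 4096, 8
full = full_params(d, d)
lora = lora_trainable_params(d, d, r)
print(f"full fine-tuning: {full:,} trainable parameters")
print(f"LoRA (rank {r}):    {lora:,} trainable parameters")
print(f"ratio: 1/{full // lora}")
```

The forward pass still runs through the full frozen model, so the end-to-end saving is smaller than this ratio suggests. Most of the gain comes from reduced memory, which in turn allows cheaper GPUs or larger batches.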

Another major factor is provider pricing. The exact same H100 GPU may cost $2/hour on a specialized GPU marketplace and over $7/hour on a hyperscale cloud platform. The hardware itself is identical. The difference comes from enterprise support layers, integrated cloud ecosystems, and SLA guarantees that many AI teams simply do not need.
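Applied to a whole run, that hourly gap adds up quickly. The Python sketch below compares the same job across providers using the $2 and $7 rates mentioned above. The provider labels and the 500 GPU-hour job size are hypothetical, and $7 is used as a flat rate even though the text says "over $7".

```python
def compare_providers(gpu_hours: float, hourly_rates: dict) -> dict:
    """Return {provider: total cost in USD}, ordered from cheapest to most expensive."""
    costs = {name: gpu_hours * rate for name, rate in hourly_rates.items()}
    return dict(sorted(costs.items(), key=lambda item: item[1]))


# Hypothetical job of 500 H100 GPU-hours at the two rates quoted in the text.
rates = {"hyperscaler": 7.00, "gpu_marketplace": 2.00}
costs = compare_providers(500, rates)
for provider, usd in costs.items():
    print(f"{provider:<16} ${usd:,.2f}")

cheapest, priciest = list(costs.values())[0], list(costs.values())[-1]
print(f"difference: ${priciest - cheapest:,.2f}")
```

A comparison like this covers list price only. Storage, bandwidth, and minimum commitments vary by provider and belong in the same spreadsheet.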

Final Takeaway

AI training costs are no longer determined solely by hardware availability. They are largely a function of infrastructure strategy, engineering efficiency, and provider selection.

The same experiment can cost $50 on an optimized pipeline using reasonably priced GPU infrastructure, or several hundred dollars on an inefficient setup running in an overpriced cloud environment. That gap is not unusual. It is now a standard part of the AI infrastructure market.

The most effective way to control costs is straightforward:

- Choose GPUs based on workload requirements, not hype

- Optimize the training pipeline before scaling infrastructure

- Compare GPU providers carefully before committing to long runs

It is also important to understand that AI training costs are not fixed. GPU prices shift with availability and infrastructure demand, while the number of GPU-hours a project consumes depends on the efficiency of the engineering pipeline. Even small improvements in workload optimization or provider selection can reduce expenses substantially without affecting model quality.

This is one of the main reasons many companies are moving away from traditional cloud platforms toward specialized GPU marketplaces that offer more flexibility and better pricing transparency. In 2026, the ability to manage AI infrastructure efficiently is becoming just as important as the quality of the models themselves.